Shadow AI and the Death of Cloud-Native Agents: Why the Monolith is Back

How a localized open-source experiment exposed the fatal flaw in enterprise Kubernetes AI deployments.

The trajectory of artificial intelligence development experienced a profound architectural divergence in early 2026. While enterprise technology conglomerates continued to pursue heavily centralized, cloud-dependent, and highly censored model deployments, a localized, open-source experiment fundamentally altered the landscape of autonomous systems. Originating as a weekend programming endeavor designed to interface a Large Language Model (LLM) with the WhatsApp messaging protocol, the OpenClaw AI agent project rapidly evolved into the fastest-growing open-source repository of the year, amassing over 216,000 GitHub stars.

But the phenomenon surrounding this architecture isn't merely a product of algorithmic advancement. It's a masterclass in system design.

As a Principal Solution Architect, I've seen firsthand how corporate AI initiatives are hampered by liability concerns and stringent governance. They simply cannot provide the intimate system access that workers need. Consequently, we are witnessing a massive "Shadow AI" movement. Workers are bypassing corporate IT to deploy self-hosted agents capable of performing complex, multi-step workflows.

Take, for example, a deployment in the financial sector I recently reviewed. A municipal bond analyst ran a self-hosted OpenClaw instance bridged to their Outlook email and a Chrome browser extension. By instructing the agent via mobile messaging to retrieve sent emails, cross-reference them with EMMA, the national municipal bond repository (emma.msrb.org), and analyze quarterly reports, the analyst reduced hours of manual data aggregation to a three-minute autonomous operation.

This monumental leap in productivity for unstructured, knowledge-intensive tasks is powered by a fundamentally different architectural approach. By bypassing the latency and overhead of a distributed Kubernetes orchestration layer, this local-first model executed operations orders of magnitude faster and at a fraction of the enterprise API cost. And for those of us designing enterprise systems, it’s time to take notes.

The Distributed Trap: Why Kubernetes Fails Stateful Agents

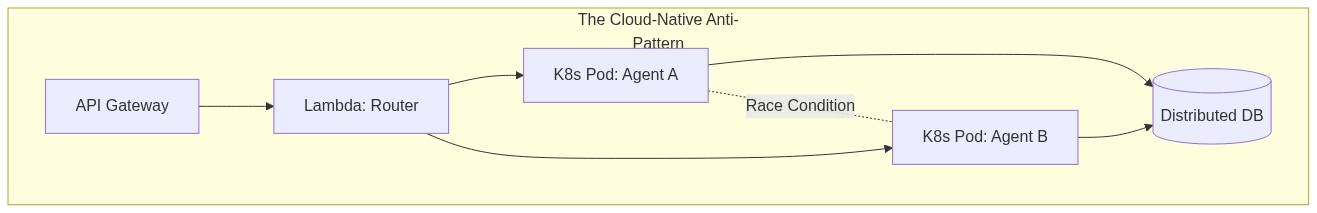

In typical enterprise environments, we default to deploying agentic systems across Kubernetes clusters, relying on serverless lambda functions and distributed databases. It’s what we know. It’s "cloud-native."

But here is the thing nobody tells you about stateful AI agents: applying standard microservice parallelism to an agent interacting with a local filesystem or shared database leads to catastrophic corruption.

If an orchestration framework allows Agent A to modify a Python script while Agent B simultaneously attempts to run a test suite against that same script, the resulting race condition destroys the integrity of the workspace. Furthermore, distributed architectures introduce severe latency, state synchronization failures, and split-brain scenarios where different components hold divergent views of the agent's environment.
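To make the Agent A / Agent B race concrete, here is a minimal Python sketch of the usual remedy: every operation on a workspace goes through one lock, so a test run can only ever see a complete version of the script. The `Workspace` class, its method names, and the toy `add` function are my own illustrations, not part of OpenClaw.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class Workspace:
    """Hypothetical shared workspace whose operations run one at a time."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self.script = "def add(a, b):\n    return a + b\n"
        self.log: list[str] = []

    def edit_script(self, new_source: str) -> None:
        # Agent A: holds the lock for the whole edit, so no test run
        # can observe a half-written script.
        with self._lock:
            self.script = new_source
            self.log.append("edit")

    def run_tests(self) -> bool:
        # Agent B: takes the same lock, so it tests a complete version
        # of the script, either before or after the edit.
        with self._lock:
            namespace: dict = {}
            exec(self.script, namespace)
            passed = namespace["add"](2, 3) == 5
            self.log.append("test")
            return passed


def run_agents(workspace: Workspace) -> bool:
    """Launch the editing agent and the testing agent concurrently."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        edit = pool.submit(
            workspace.edit_script, "def add(a, b):\n    return b + a\n"
        )
        test = pool.submit(workspace.run_tests)
        edit.result()
        return test.result()
```

Within a single process this is one lock. Across pods in a cluster, the same guarantee needs a distributed lock or lease, which is exactly the kind of extra moving part the rest of this article argues against.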

We have spent the last decade decoupling everything. But for autonomous agents that require absolute deterministic adherence to identity and operating procedures, decoupling is a liability.

The Gateway Architecture: Local-First Monolithic Control

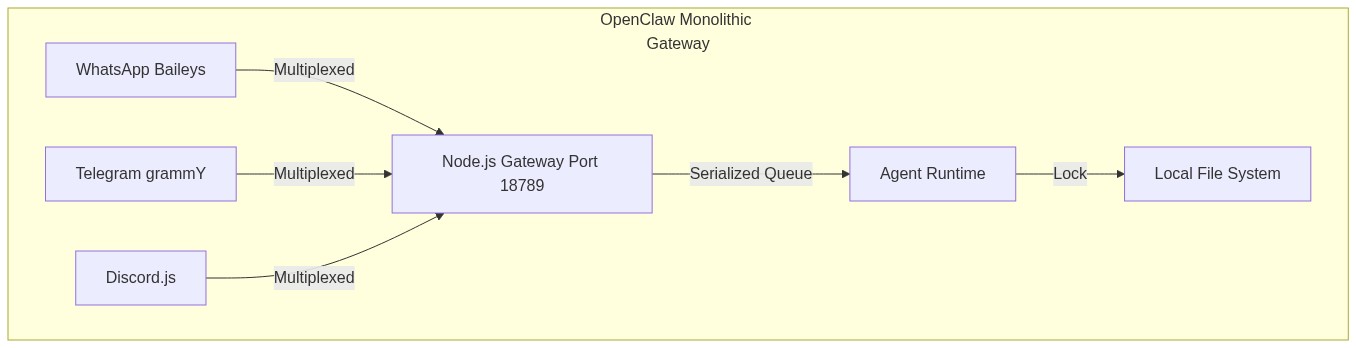

The foundational architectural decision that separates OpenClaw from traditional cloud-native AI deployments is the complete rejection of distributed microservices in favor of a monolithic, local-first control plane.

To eliminate the failure modes of distributed state, the architecture relies on the Gateway: a single, always-on daemon process that serves as the absolute source of truth for all channel connections, routing logic, and session state.
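As a rough illustration of the "single source of truth" idea, the sketch below keeps all session state inside one process and applies inbound messages through a single consumer loop. It is an assumption-laden toy, not OpenClaw's code: the `Gateway` class, the `submit`/`run`/`stop` methods, and the session ID format are all hypothetical.

```python
import asyncio
from collections import defaultdict


class Gateway:
    """Hypothetical single-process owner of all session state."""

    def __init__(self) -> None:
        self.sessions: dict[str, list[str]] = defaultdict(list)
        self._inbox: asyncio.Queue = asyncio.Queue()

    async def submit(self, session_id: str, text: str) -> None:
        # Channels never touch session state directly; they only enqueue.
        await self._inbox.put((session_id, text))

    async def stop(self) -> None:
        # A None sentinel tells the consumer loop to exit.
        await self._inbox.put(None)

    async def run(self) -> None:
        # One consumer loop: messages are applied strictly in arrival
        # order, so session state has exactly one writer.
        while True:
            item = await self._inbox.get()
            if item is None:
                break
            session_id, text = item
            self.sessions[session_id].append(text)


async def demo() -> dict[str, list[str]]:
    gateway = Gateway()
    worker = asyncio.create_task(gateway.run())
    await gateway.submit("whatsapp:alice", "fetch sent emails")
    await gateway.submit("slack:bob", "status?")
    await gateway.submit("whatsapp:alice", "summarize Q3 report")
    await gateway.stop()
    await worker
    return dict(gateway.sessions)
```

Running `asyncio.run(demo())` returns the per-session message history. Because only the loop in `run` mutates `sessions`, no second component can hold a divergent copy.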

Addressing the Scaling Elephant

Every architect reading the word "monolith" will immediately ask: How does it scale horizontally? The answer requires a paradigm shift.

You don't scale an autonomous agent system by shattering its control plane into microservices. You scale it by deploying more isolated monoliths. Instead of one massive Kubernetes cluster running 10,000 fragmented agent components for an entire enterprise, you provision 1,000 isolated Gateway instances—one dedicated to each team, user, or specific operational domain. This "fleet of monoliths" approach guarantees absolute state integrity per workspace while scaling horizontally through sheer duplication, not distributed complexity.
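A minimal sketch of what "scaling by duplication" could look like from a provisioning script: each owner gets its own Gateway description with a private port and state directory, and nothing is shared between instances. The `GatewayInstance` fields, the base port, and the `/srv/gateways` root are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class GatewayInstance:
    """Hypothetical description of one isolated Gateway deployment."""

    owner: str
    bind_host: str
    port: int
    state_dir: PurePosixPath


def provision_fleet(
    owners: list[str],
    base_port: int = 18000,
    root: str = "/srv/gateways",
) -> list[GatewayInstance]:
    """Give each distinct owner its own port and state directory."""
    fleet = []
    # Sorting the de-duplicated owners keeps port assignment stable
    # across runs for the same set of owners.
    for offset, owner in enumerate(sorted(set(owners))):
        fleet.append(
            GatewayInstance(
                owner=owner,
                bind_host="127.0.0.1",
                port=base_port + offset,
                state_dir=PurePosixPath(root) / owner,
            )
        )
    return fleet
```

Adding capacity means adding entries to `owners`. There is no shared control plane to resize and no cross-instance state to reconcile.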

By funneling all inbound payloads through a universal protocol translator, the Gateway normalizes the data, stripping proprietary metadata and converting messages, images, and documents into a unified internal schema before passing them to the agent runtime.
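The normalization step can be pictured as a set of per-channel adapters that all emit the same record type. The sketch below is my own approximation: the `Envelope` schema and both inbound payload shapes are hypothetical and are not taken from OpenClaw, WhatsApp, or Outlook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Hypothetical unified schema handed to the agent runtime."""

    channel: str
    sender: str
    text: str
    attachments: tuple[str, ...] = ()


def from_whatsapp(payload: dict) -> Envelope:
    # Only the fields the runtime needs are copied; any other vendor
    # metadata in the payload is dropped here.
    return Envelope(
        channel="whatsapp",
        sender=payload["from"],
        text=payload.get("body", ""),
        attachments=tuple(m["url"] for m in payload.get("media", [])),
    )


def from_email(payload: dict) -> Envelope:
    return Envelope(
        channel="email",
        sender=payload["sender"],
        text=payload.get("plain_text", ""),
        attachments=tuple(payload.get("attachment_paths", [])),
    )


NORMALIZERS = {"whatsapp": from_whatsapp, "email": from_email}


def normalize(channel: str, payload: dict) -> Envelope:
    """Route a raw payload to its adapter and return the unified record."""
    try:
        adapter = NORMALIZERS[channel]
    except KeyError:
        raise ValueError(f"unsupported channel: {channel}") from None
    return adapter(payload)
```

Supporting a new channel then means writing one adapter and registering it in `NORMALIZERS`; the agent runtime only ever sees `Envelope`.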

The Security Paradigm Shift: Defend the Host, Not the API

When you give an LLM the ability to execute shell commands and write to disks, your security perimeter changes entirely. The standard playbook of exposing REST APIs and defending them with OAuth tokens is insufficient.

Security in the OpenClaw architecture is built fundamentally around local containment.

By default, the Gateway enforces a strict loopback bind mode (127.0.0.1). It outright refuses external connections unless explicitly authenticated via cryptographic tokens. Remote access is designed to bypass public internet exposure entirely, relying instead on zero-trust virtual private networks. Tools like Tailscale create encrypted, peer-to-peer WireGuard tunnels directly into the containerized environment.
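The policy described above (loopback by default, a token for anything else) can be expressed in a few lines. This is a sketch of the rule as I understand it, not OpenClaw's implementation; the function names `check_bind` and `authorize` are hypothetical.

```python
import hmac
import ipaddress
from typing import Optional


def is_loopback(host: str) -> bool:
    """True for 127.0.0.0/8 and ::1."""
    return ipaddress.ip_address(host).is_loopback


def check_bind(bind_host: str, auth_token: Optional[str]) -> None:
    """Refuse to start on a non-loopback address without a token."""
    if not is_loopback(bind_host) and not auth_token:
        raise RuntimeError(
            f"refusing to bind {bind_host} without an auth token"
        )


def authorize(
    peer_host: str, presented: Optional[str], expected: str
) -> bool:
    """Loopback peers pass; any other peer must present the token."""
    if is_loopback(peer_host):
        return True
    if presented is None:
        return False
    # Constant-time comparison avoids leaking how many leading
    # characters of the token matched.
    return hmac.compare_digest(presented.encode(), expected.encode())
```

A startup routine would call `check_bind` once before opening the socket and `authorize` on every new connection.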

Consider this conceptual configuration snippet demonstrating how a zero-trust mesh is enforced over the gateway process:

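    # Conceptual: Tailscale VPN Tunneling over the Gateway Container
    services:
      gateway:
        image: openclaw/gateway:latest
        # Share the tailscale container's network namespace, so the
        # Gateway's loopback port is only visible inside that namespace.
        network_mode: service:tailscale
        environment:
          - OPENCLAW_GATEWAY_BIND=127.0.0.1
          - OPENCLAW_REQUIRE_AUTH=true
      tailscale:
        image: tailscale/tailscale:latest
        volumes:
          - tailscale-data:/var/lib/tailscale
        environment:
          - TS_AUTHKEY=tskey-auth-xxxxx
          # Persist node state in the mounted volume across restarts.
          - TS_STATE_DIR=/var/lib/tailscale
          # Conceptual placeholder: in practice a loopback-only port is
          # usually published to the tailnet with `tailscale serve`.
          - TS_ROUTES=127.0.0.1/32

    # Named volumes must be declared at the top level.
    volumes:
      tailscale-data: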

You are no longer defending exposed APIs; you are defending the host machine's network interface. This greatly reduces the complexity of the deployment architecture and leaves very little network attack surface exposed.

The Takeaway for Architects

As we move further into the era of personal, stateful, and decentralized AI infrastructure, we must unlearn some of the cloud-native dogmas we’ve held for the past decade.

For developers building proprietary AI agent systems, the Gateway architecture provides a critical blueprint:

- Decouple the ingress layer from the LLM inference layer entirely.

- Construct a localized, single-process control plane.

- Bind strictly to loopback ports and rely on VPNs for remote access.

In Part 2 of this series, we will dive deeper into the core execution engine and explore why vector databases and RAG pipelines are failing us, and how a Hierarchical Markdown Memory Architecture (HMMA) provides deterministic agent behavior.