The Enterprise Never Conformed to the Software. It Never Will.

Gary's COBOL job runs at 2 AM. It has no documentation. He's retiring in November. This is why product-led growth fails in enterprise — and why Forward Deployed Engineering exists.

In 2019, a Red Hat field engineer I know spent four months embedded at a regional bank in the American Midwest. His job, technically, was to migrate their workloads to OpenShift. In practice, he spent the first six weeks untangling a custom COBOL batch job that ran every night at 2 AM, had no documentation, and that exactly one person at the bank — a sixty-three-year-old systems programmer named Gary — fully understood. Gary was retiring in November.

The migration didn't fail. But it didn't happen the way the sales deck described either. It happened because a skilled engineer sat inside that bank's reality long enough to understand what Gary understood, translate it into something OpenShift could work with, and make the transition survivable for everyone who came after.

That's the job. Not the job as marketed. The actual job.

The Assumption That Broke Product-Led Growth

For most of the last decade, the enterprise software industry operated on a clean fiction: that complexity could be abstracted away. Self-serve onboarding, frictionless trials, bottom-up adoption — the Product-Led Growth playbook assumed that if you built the product well enough, enterprises would bend toward it. And for simpler software — project trackers, communication tools, lightweight analytics — that was true enough to build companies worth billions.

But somewhere around 2022, the industry started hitting a wall it couldn't charm its way past.

The wall is this: most enterprise technology value is deeply entangled with organizational history. The Db2 database that nobody wants to migrate isn't just a technical artifact — it's thirty years of business logic, compliance decisions, and audit trails that the organization is legally required to maintain. The MQ message broker connecting three legacy applications isn't just middleware — it's the nervous system of a supply chain that processes two billion dollars in transactions annually. Pull the wrong thread and the whole thing comes apart.

Forward Deployed Engineers exist because someone finally admitted this out loud. You cannot deploy complex enterprise software by handing it to the customer and hoping they figure it out. You have to go in, understand the existing system on its own terms, and build the bridge from where the customer actually is to where the technology needs them to be.

Palantir figured this out first, in intelligence and finance, and the model spread. But the companies where FDE has become structurally essential are not primarily AI labs. They're IBM, Red Hat, Microsoft, SAP, Accenture — the companies doing the unglamorous, load-bearing work of enterprise modernization.

What Enterprise FDE Actually Looks Like

The public narrative around Forward Deployed Engineering skews heavily toward AI. OpenAI FDEs deploying RAG pipelines. Anthropic FDEs building Model Context Protocol servers. The coverage is vivid and the compensation figures are dramatic.

What gets less coverage is the Red Hat engineer in a manufacturing plant in Stuttgart configuring Ansible playbooks to automate infrastructure that previously required three full-time administrators. Or the Microsoft FastTrack engineer spending two weeks mapping a company's Active Directory to Microsoft Entra ID before a single user can sign in to Microsoft 365. Or the IBM consultant building a DataStage pipeline that bridges a Db2 warehouse with a Kafka stream while keeping the existing COBOL-based reporting system alive in parallel.

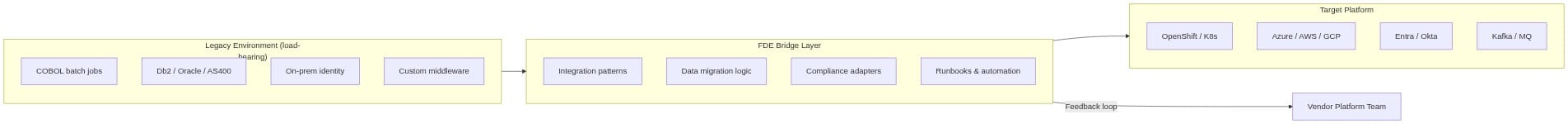

These engagements share a structural pattern:

The FDE is the bridge. Not a translator — a constructor. They write production code that lives in the customer's environment, often indefinitely, doing work that no standard product feature was designed to do.

What makes this hard is not the technology. It's the context. Every enterprise has a unique combination of technical debt, compliance constraints, organizational politics, and institutional memory. The FDE has to understand all of it before writing a single line of code. Gary's COBOL job wasn't documented because Gary was the documentation.

The Redundant Work Problem Nobody Is Solving

Here's what I've come to believe after watching this pattern across dozens of engagements: about forty percent of what enterprise FDEs build, they've built before.

Not identically. But structurally. The Db2-to-Postgres migration at Bank A has a different schema and different compliance requirements than the one at Bank B. But the approach — the sequencing, the data validation logic, the cutover strategy, the rollback procedure — is substantially similar. The IBM FDE doing their fifth financial services data migration is reinventing a wheel their colleagues already built twice this quarter, in different teams, in different countries, with no mechanism to share what they learned.
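The data validation logic is a good example of the part that repeats. As a minimal sketch, assuming two in-memory SQLite databases as stand-ins for the source and target systems, a reusable check might compare a row count and a content digest per table. The function names, the `accounts` table, and the sample rows are all hypothetical; a real Db2-to-Postgres check would also need to normalize types before hashing.

```python
import hashlib
import sqlite3


def table_fingerprint(conn, table, key):
    """Return (row_count, sha256 hex digest) for a table, ordered by key."""
    # Table and column names are interpolated directly; in this sketch they
    # come from trusted migration config, never from user input.
    rows = conn.execute(f"SELECT * FROM {table} ORDER BY {key}").fetchall()
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def validate_copy(source, target, table, key):
    """True when both sides hold the same rows for the given table."""
    return table_fingerprint(source, table, key) == table_fingerprint(target, table, key)


def make_db(rows):
    """Build a throwaway in-memory database holding the sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance TEXT)")
    conn.executemany("INSERT INTO accounts VALUES (?, ?)", rows)
    return conn


source = make_db([(1, "100.00"), (2, "250.50")])
good_target = make_db([(2, "250.50"), (1, "100.00")])  # same rows, other order
bad_target = make_db([(1, "100.00"), (2, "250.05")])   # one digit transposed

print(validate_copy(source, good_target, "accounts", "id"))  # True
print(validate_copy(source, bad_target, "accounts", "id"))   # False
```

The schema and the compliance rules change from bank to bank, but a check shaped like this one mostly does not, which is the sense in which the work is structurally repeated.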

This is the real cost of the enterprise FDE model. Not the compensation (though that's real). The structural waste of bespoke knowledge that dies when the engagement ends.

The Salesforce-to-Snowflake connector is the canonical example used in cloud-native FDE circles. But in enterprise FDE circles, the equivalent is more like:

- The LDAP-to-Entra bridge that handles nested group structures nobody documented

- The DataStage job template for regulatory reporting that handles fiscal year boundaries correctly

- The Ansible playbook pattern for zero-downtime OpenShift node drains in environments that can't tolerate maintenance windows

- The IBM MQ topology for active-active high availability that works with the specific version of WebSphere the customer refuses to upgrade

Every one of these exists in multiple forms, across multiple teams, at multiple companies. None of them are being systematically captured and reused.
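To make the first item on that list concrete, here is a minimal sketch of the core of a nested-group bridge: flattening nested groups into effective user membership, tolerating cycles, and failing loudly when nesting exceeds a limit. The data layout (a plain dict from group name to direct members), the `group:` prefix, and the name `flatten_members` are assumptions for illustration; a real bridge would read from the directory rather than from a dict.

```python
from typing import Dict, List, Set

GROUP_PREFIX = "group:"  # hypothetical convention marking a nested group


def flatten_members(
    groups: Dict[str, List[str]],
    root: str,
    depth_limit: int = 8,
) -> Set[str]:
    """Return every user reachable from `root`, following nested groups.

    Cycles (A contains B contains A) are tolerated. Nesting deeper than
    `depth_limit` raises, so the problem is surfaced instead of hidden.
    """
    users: Set[str] = set()
    visited: Set[str] = set()

    def walk(group: str, depth: int) -> None:
        if group in visited:
            return  # already expanded, or a cycle back to an ancestor
        if depth > depth_limit:
            raise ValueError(f"nesting deeper than {depth_limit} at {group!r}")
        visited.add(group)
        for member in groups.get(group, []):
            if member.startswith(GROUP_PREFIX):
                walk(member[len(GROUP_PREFIX):], depth + 1)
            else:
                users.add(member)

    walk(root, 1)
    return users


directory = {
    "finance": ["alice", "group:auditors"],
    "auditors": ["bob", "group:finance"],  # the cycle nobody documented
}
print(sorted(flatten_members(directory, "finance")))  # ['alice', 'bob']
```

The depth limit is a parameter rather than a constant because it is exactly the kind of value that gets discovered on-site and differs per customer.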

Why The Tooling Has Been Wrong

When people talk about FDE infrastructure, they default to Kubernetes operators. The mental model comes from the AI lab FDE experience, where the deployment environment is cloud-native, the toolchain is modern, and the customer has already adopted container orchestration.

That mental model fails for enterprise FDE almost immediately.

The IBM engineer doing a mainframe modernization engagement is not running a Kubernetes operator in the customer environment. They have SSH access to a RHEL server, a VPN connection that drops every four hours, and a change management process that requires two weeks of lead time to install new software. The Microsoft FastTrack engineer configuring Azure Landing Zones is scripting against ARM REST APIs and PowerShell, not deploying CRDs.

The right tooling for enterprise FDE doesn't assume a target runtime. It meets FDEs where they already are. That means:

```python
# Platform-agnostic pattern: pulled from a registry, runs anywhere
from fde_patterns import Pattern, Context

# FDE discovers a validated pattern from previous engagements
ldap_bridge = Pattern.load("ldap-to-entra-nested-groups", version="2.1.0")

# Parameterized for this specific customer context
ctx = Context(
    source_ldap_host=customer.ldap_server,
    target_tenant=customer.azure_tenant_id,
    compliance=["sox", "gdpr"],
    group_depth_limit=8,  # customer-specific constraint discovered on-site
)

# Deploys however the environment allows: API, script, Ansible, whatever
ldap_bridge.deploy(ctx, runtime="ansible")
```

Not YAML. Not CRDs. Code, because FDEs write code. A language library that encapsulates proven patterns, accepts customer-specific parameters, and deploys via whatever mechanism the customer environment actually supports. The runtime is an adapter, not the foundation.
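One way to read "the runtime is an adapter" is as a small registry of rendering functions keyed by runtime name. The sketch below is hypothetical throughout: `PatternSketch`, `register_runtime`, and the file naming (`<pattern>.yml`, `<pattern>.sh`) are invented for illustration and are not the API of any existing library. It only builds the command a pattern would run and executes nothing.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Hypothetical registry: runtime name -> function that renders a pattern
# and its parameters into a command that runtime can execute.
Renderer = Callable[[str, Dict[str, object]], str]
RUNTIMES: Dict[str, Renderer] = {}


def register_runtime(name: str) -> Callable[[Renderer], Renderer]:
    """Decorator that registers a renderer under a runtime name."""
    def register(fn: Renderer) -> Renderer:
        RUNTIMES[name] = fn
        return fn
    return register


@register_runtime("ansible")
def render_ansible(pattern: str, params: Dict[str, object]) -> str:
    extra_vars = " ".join(f"{k}={v}" for k, v in sorted(params.items()))
    return f"ansible-playbook {pattern}.yml -e '{extra_vars}'"


@register_runtime("shell")
def render_shell(pattern: str, params: Dict[str, object]) -> str:
    exports = " ".join(f"{k.upper()}={v}" for k, v in sorted(params.items()))
    return f"{exports} ./{pattern}.sh"


@dataclass
class PatternSketch:
    name: str
    params: Dict[str, object] = field(default_factory=dict)

    def plan(self, runtime_name: str) -> str:
        """Return the command this pattern would run; nothing is executed."""
        try:
            renderer = RUNTIMES[runtime_name]
        except KeyError:
            raise ValueError(f"no adapter for runtime {runtime_name!r}") from None
        return renderer(self.name, self.params)


bridge = PatternSketch("ldap-to-entra-nested-groups", {"group_depth_limit": 8})
print(bridge.plan("ansible"))
print(bridge.plan("shell"))
```

The pattern and its parameters stay the same across both calls; only the adapter changes. Supporting a customer that allows nothing but PowerShell would mean registering one more renderer, not rewriting the pattern.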

What Thought Leadership Gets Wrong About This

Most writing about Forward Deployed Engineering treats it as a delivery model for cutting-edge technology. The narrative is: complex new system (AI, autonomous vehicles, next-gen data infrastructure) requires embedded experts because customers can't operationalize it alone.

That narrative is not wrong. But it captures maybe thirty percent of the FDE market by volume.

The larger, quieter market is enterprise modernization. The Red Hat customer on RHEL 7 who needs to reach RHEL 9 without breaking production. The IBM customer whose application dependencies are so tangled that no automated migration tool has succeeded. The Microsoft customer whose Active Directory was built over fifteen years by twelve different administrators who all had different opinions about OU structure.

These customers are not on the bleeding edge. They are managing infrastructure that the rest of the industry has largely moved past but that they cannot simply abandon. Their FDE needs are just as real, just as expensive, and just as systematically underserved.

The difference is that serving them requires a different philosophy about what FDE tooling should be.

Cloud-native FDE: the tool shapes the environment

Enterprise FDE: the environment shapes the tool

This is the inversion the industry hasn't fully accepted. The K8s operator works because it assumes a certain kind of environment exists and deploys into it. Enterprise FDE tooling has to work backwards — discover what the environment is, understand its constraints, and adapt the pattern accordingly.
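Working backwards can start very small. As a sketch under stated assumptions, the function below probes a host for executables and picks the first runtime that is both installed and permitted. The preference order, the runtime names, and the `forbidden` parameter are hypothetical; `shutil.which` is the standard-library lookup, injected as an argument so the logic can be exercised without touching a real host.

```python
import shutil
from typing import Callable, Optional, Sequence

# Hypothetical preference order: richest tooling first, plain shell last.
PREFERRED = (
    ("ansible", "ansible-playbook"),
    ("powershell", "pwsh"),
    ("shell", "sh"),
)


def choose_runtime(
    which: Callable[[str], Optional[str]] = shutil.which,
    forbidden: Sequence[str] = (),
) -> str:
    """Pick the first runtime whose executable exists and is permitted."""
    for runtime_name, executable in PREFERRED:
        if runtime_name in forbidden:
            continue  # e.g. blocked by the customer's change management
        if which(executable) is not None:
            return runtime_name
    raise RuntimeError("no usable runtime found on this host")


def fake_which(executable: str) -> Optional[str]:
    """Simulated host on which only a POSIX shell is installed."""
    return "/bin/sh" if executable == "sh" else None


print(choose_runtime(which=fake_which))  # shell
```

A real discovery step would look at far more than installed binaries (network reachability, credentials, change windows), but the direction is the same: the environment is an input to the tool, not an assumption baked into it.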

The Question Worth Sitting With

I don't think enterprise FDE tooling exists yet in a form that systematically solves this problem. There are internal wikis at IBM and Red Hat and Microsoft containing hard-won knowledge that never leaves those organizations. There are senior FDEs carrying six years of accumulated pattern knowledge in their heads who represent flight risks to every team that relies on them.

The question I keep returning to is this: what does it cost the enterprise software industry to keep treating field intelligence as a byproduct rather than a primary output?

The Gary problem — the sixty-three-year-old who's the only person who understands the thing — is not a technology problem. It's a knowledge management problem. FDE infrastructure, done right, is how you solve it before Gary retires and takes the institution's memory with him.

That's not a Kubernetes operator. That's something more fundamental: a systematic way to capture what experienced engineers learn in the field and make it available to the next person walking into the same mess.

Whether that's a platform, a registry, a community, or something that doesn't have a name yet — that's the honest question. The tooling should follow the answer, not precede it.

What's the most expensive piece of institutional knowledge your organization is currently keeping alive in one person's head?