Why Enterprise Can't Just Copy the AI Lab FDE Model

OpenAI and Anthropic's FDE models look nothing like what works at a Stuttgart manufacturing plant. The constraints are different. The infrastructure needs to be too.

In 2019, a Red Hat field engineer spent four months embedded at a regional bank in the American Midwest. His job, technically, was to migrate their workloads to OpenShift. In practice, he spent the first six weeks untangling a custom COBOL batch job that ran every night at 2 AM, had no documentation, and that exactly one person at the bank — a sixty-three-year-old systems programmer named Gary — fully understood. Gary was retiring in November.

The migration didn't fail. But it didn't happen the way the sales deck described. It happened because a skilled engineer sat inside that bank's reality long enough to understand what Gary understood, translate it into something OpenShift could work with, and make the transition survivable for everyone who came after Gary left.

That's the job. Not the job as marketed. The actual job.

If you've read the first post in this series, you know the argument: AI labs have built a proven FDE model that enterprise organizations are now trying to replicate — and the economics of that model break without better infrastructure. This post is for the people trying to figure out what that infrastructure needs to look like, and running into a problem they can't quite name.

The problem is this: every architectural pattern for FDE infrastructure assumes the AI lab environment. It assumes the customer has adopted Kubernetes, or is willing to. It assumes modern observability tooling. It assumes a change management process that moves in days, not months. Those assumptions hold for OpenAI deploying a RAG pipeline to a VC-backed startup. They do not hold for a Red Hat engineer configuring Ansible playbooks in a Stuttgart manufacturing plant, or a Microsoft FastTrack engineer mapping fifteen years of accumulated Active Directory policy to Microsoft Entra ID.

The difference isn't scale. It's directionality.

In AI lab FDE: the tool shapes the environment. Deploy the operator, and the customer environment must accommodate it. This works because the customer chose the AI lab's platform and accepted its architectural requirements.

In enterprise FDE: the environment shapes the tool. The IBM engineer arrives at a bank with SSH access, a VPN that drops every four hours, and a change management queue that requires two weeks of lead time to install new software. Their tooling has to work with what's there. The environment is not going to change to accommodate the tooling.

This inversion is the reason you cannot copy the AI lab FDE model into enterprise contexts and expect it to work. And it's the architectural constraint that any serious enterprise FDE platform has to start from — not paper over.

The Environment-Shapes-Tool Inversion

It helps to make this concrete.

An OpenAI field engineer deploying a custom GPT integration to a Series B SaaS company is working in an environment where the customer has already made architectural commitments: cloud-native, Kubernetes-orchestrated, modern DevOps toolchain, relatively permissive change management. The engineer arrives with a toolkit tuned for that environment. When the environment doesn't quite fit, the customer can usually adjust — move a workload, expose an API, grant a permission.

Now consider what the Red Hat engineer at the Midwestern bank actually faced:

- No Kubernetes cluster in the customer environment — the bank's infrastructure runs on RHEL bare metal and VMware, managed by a team that has never administered a container orchestrator

- Two-week change management lead time — every new piece of software requires a change advisory board review, security scan, and sign-off chain before it can be installed

- VPN that drops every four hours — the engineer's remote connection is unstable, so anything requiring persistent network connectivity fails silently

- Gary — the single human who understands the critical system, who is not interested in learning new deployment paradigms six months before retirement

The engineer's tooling had to work with all of this. SSH and Ansible, not Helm charts. RHEL scripts, not CRDs. Documentation that Gary would consent to write, not YAML manifests that Gary would never touch.

This isn't an edge case. It's the modal enterprise FDE environment: legacy infrastructure such as on-prem RHEL, VMware, COBOL batch jobs, AS/400 systems, and MQ message brokers that pre-date modern API conventions. The AI lab environment (cloud-native, container-orchestrated, modern toolchain) is the exception in the installed base of enterprise software, not the rule. As of 2025, on-premises clusters still account for over 50% of virtualization installations, and the legacy modernization backlog is growing, not shrinking.

The architectural implication is severe: tooling built around the AI lab environment will fail in enterprise contexts not because it's poorly designed, but because it's designed for a different directionality of constraint. The tool assumes the environment will bend. Enterprise environments don't bend.

Understanding this inversion clearly is the prerequisite for designing enterprise FDE infrastructure that actually works. Trying to copy the AI lab model without naming the inversion is why so many "FDE programs" at enterprise companies end up looking like professional services with better job titles.

What Enterprise FDE Actually Looks Like

The public FDE conversation skews toward AI labs — the Palantir deployments in intelligence, the OpenAI FDEs embedding in enterprise ChatGPT rollouts. What gets less coverage is the Red Hat engineer in Stuttgart, the Microsoft FastTrack engineer doing Active Directory mapping, the IBM consultant keeping a thirty-year-old system alive while migrating workloads off it.

These engagements share a structural pattern:

The FDE is the bridge. Not a translator — a constructor. They write production code that lives in the customer's environment, often indefinitely, doing work that no standard product feature was designed to do.

The redundant work problem surfaces here with precision. Every enterprise FDE organization has some version of these four categories of work that they're solving repeatedly, in parallel, across different customers and regions:

- LDAP-to-Entra bridges that handle nested group structures nobody documented, with customer-specific attribute mappings that evolved organically over fifteen years of AD administration by people who are no longer at the company

- DataStage job templates for regulatory reporting that handle fiscal year boundary conditions correctly — the kind of edge case that a generic data pipeline tool gets wrong and that a compliance audit will surface immediately

- Ansible playbook patterns for zero-downtime OpenShift node drains in environments that can't tolerate maintenance windows — manufacturing plants, trading floors, healthcare systems where "we'll take it down for 20 minutes" is not an acceptable answer

- IBM MQ topology configurations for active-active high availability that work with the specific version of WebSphere the customer refuses to upgrade because recertification costs more than running the old version

Every one of these exists in multiple forms, across multiple teams, at multiple vendors. None of them are being systematically captured and reused. Practitioners consistently estimate the structural overlap at 30–50% of engagement work — a figure almost no organization formally tracks, which is itself part of the problem.

Senior field engineers at large infrastructure vendors describe the experience in strikingly similar terms. A composite of those conversations: "I show up with my standard toolkit. First week, I figure out which half of it I'm not allowed to use. Second week, I figure out what I actually have to work with. Third week, I start building. And everything I build is going to be thrown away when I leave, because none of it was built with reuse in mind, because there's no infrastructure for that." (This reflects a pattern across multiple field engineering conversations; it is not a direct quote from a single identifiable individual.)

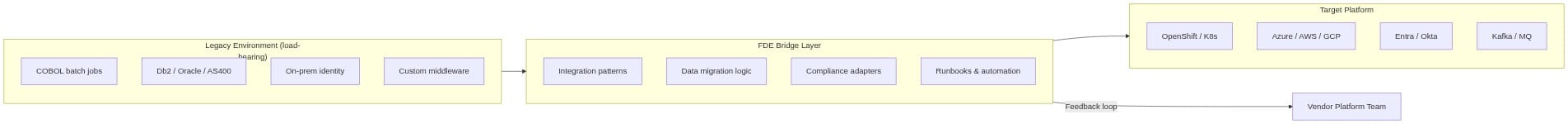

The bridge layer in that diagram — the FDE layer — is where the institutional knowledge lives. And right now, that knowledge has no structural home.

The Full Loop as Architectural Goal

AI lab FDE organizations have something enterprise FDE organizations largely don't: a structural feedback path from field work to platform improvement.

In the AI lab model, the loop looks something like this: an FDE discovers a recurring integration challenge in the field → the pattern gets extracted and abstracted → it becomes a reusable component → the next FDE starts from a higher floor. The knowledge compounds. The organization gets smarter with each deployment because the mechanism for learning is built into the model, not bolted on as an afterthought.

The third post in this series describes a formal version of this loop for a Kubernetes operator context:

FDE writes bespoke code → Operator extracts patterns → Generalized CRD → Available to all FDEs

What matters here is not the specific technology. What matters is the principle: field intelligence becomes platform capability through a structural mechanism, not an informal one.

Most enterprise FDE organizations have no version of this loop. What they have is: good engineers who talk to each other occasionally, internal wikis that are perpetually out of date, Slack channels where institutional knowledge gets buried in scroll history, and the implicit expectation that senior FDEs will mentor junior ones by being physically present in the same room.

That's not a loop. That's hope.

The Full Loop is worth defining precisely as an architectural goal before we talk about how to implement it, because the implementation looks different depending on the runtime environment:

- Capture: every deployment generates operational signal — what worked, what failed, what edge cases emerged

- Extract: structural patterns are identified from that signal, separated from customer-specific configuration

- Abstract: the patterns are generalized into parameterized templates with explicit parameter schemas

- Review: a human FDE validates the abstraction before it enters the shared library — this step is not optional

- Distribute: the validated pattern becomes available to every FDE on the next similar engagement

- Iterate: telemetry from deployments using the pattern feeds back to improve it
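The Extract and Abstract steps can be illustrated with a small sketch. It assumes a deployed configuration can be represented as a flat dictionary and that the FDE marks which keys are customer-specific; the names `PatternTemplate`, `abstract_config`, and `instantiate`, along with the sample keys, are hypothetical and not part of any existing library.

```python
from dataclasses import dataclass


@dataclass
class PatternTemplate:
    """Reusable structure plus the names of the parameters it still needs."""
    fixed: dict
    parameters: tuple


def abstract_config(config: dict, customer_specific: set) -> PatternTemplate:
    """Extract: keep structural keys. Abstract: turn the rest into parameters."""
    fixed = {k: v for k, v in config.items() if k not in customer_specific}
    parameters = tuple(sorted(k for k in config if k in customer_specific))
    return PatternTemplate(fixed=fixed, parameters=parameters)


def instantiate(template: PatternTemplate, values: dict) -> dict:
    """Rebuild a concrete config, refusing to proceed if a parameter is missing."""
    missing = [p for p in template.parameters if p not in values]
    if missing:
        raise ValueError(f"missing parameters: {missing}")
    concrete = dict(template.fixed)
    concrete.update({p: values[p] for p in template.parameters})
    return concrete


# Hypothetical config captured on one engagement
deployed = {
    "ldap_host": "ldap.bank.example",
    "group_depth_limit": 8,
    "protocol": "ldaps",
    "sync_interval_min": 15,
}
template = abstract_config(deployed, {"ldap_host", "group_depth_limit"})
print(template.parameters)  # ('group_depth_limit', 'ldap_host')
```

The explicit parameter tuple is the "parameter schema" in its simplest form: the next engagement cannot instantiate the template without supplying every customer-specific value.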

This loop is what makes an FDE organization compound its knowledge rather than reset with every departure. It's what distinguishes a mature FDE function from a group of talented individuals doing isolated excellent work. And right now, it's what enterprise FDE largely lacks — not because the engineers are less capable, but because nobody has built the infrastructure for it.

The key architectural constraint is step 4: human review is mandatory before publication. Without it, the pattern library becomes a junk drawer of half-abstracted solutions that future FDEs can't trust. The review step is what makes the library a professional tool rather than a shared folder.
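One way to make the review requirement structural rather than cultural is to have the library itself refuse unreviewed patterns. The following is a minimal sketch under that assumption; `Stage`, `PatternDraft`, and `PatternLibrary` are illustrative names, and a real governance model would carry more states than this.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stage(Enum):
    ABSTRACTED = "abstracted"
    REVIEWED = "reviewed"
    PUBLISHED = "published"


@dataclass
class PatternDraft:
    name: str
    stage: Stage = Stage.ABSTRACTED
    reviewed_by: Optional[str] = None


class PatternLibrary:
    def __init__(self) -> None:
        self.published = {}

    def approve(self, draft: PatternDraft, reviewer: str) -> None:
        """Record that a named human FDE has validated the abstraction."""
        draft.reviewed_by = reviewer
        draft.stage = Stage.REVIEWED

    def publish(self, draft: PatternDraft) -> None:
        """Publishing is only possible from the REVIEWED stage."""
        if draft.stage is not Stage.REVIEWED or draft.reviewed_by is None:
            raise PermissionError(f"{draft.name} has not passed human review")
        draft.stage = Stage.PUBLISHED
        self.published[draft.name] = draft
```

Because `publish` raises instead of warning, there is no code path by which a half-abstracted pattern reaches the shared library.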

The question of how to implement the Full Loop is constrained by where the FDE actually operates — and that's where the environment-shapes-tool inversion bites again.

Why K8s Operators Don't Help Enterprise FDE Today

This has to be stated directly, without apology: Kubernetes operators are the wrong tool for enterprise FDE as it currently exists in most organizations.

The logic behind K8s operators for FDE is sound in the right environment. An operator is infrastructure that manages infrastructure — it watches custom resources, reconciles desired state with actual state, and automates complex operational workflows. That structural mirror to what FDEs do is elegant. In a cloud-native environment where the FDE and the customer both operate in Kubernetes, the operator pattern is genuinely useful.

But that's not where most enterprise FDEs operate.

Here are the specific failure modes, not as edge cases but as standard conditions:

No cluster in the customer environment. The IBM engineer doing mainframe modernization at a financial services firm is not working in a Kubernetes cluster. The customer runs RHEL bare metal and VMware. Installing a container orchestrator requires a procurement cycle, a security review, a change advisory board approval, and infrastructure provisioning time. None of this happens in the window of an FDE engagement. You cannot deploy a CRD to an environment that doesn't have a Kubernetes API server.

Change management latency. In enterprises with mature compliance frameworks — banking, healthcare, defense, utilities — installing new software in production environments requires documented review processes. A two-week CAB approval cycle is not a complaint; it's a regulatory requirement. A K8s operator that needs to be installed before it can do anything is not compatible with an environment where "installing new software" is a formally controlled change. The operator pattern assumes the operator can be deployed. That assumption fails regularly in regulated industries.

Compliance barriers to new orchestration tooling. Beyond approval timelines, many regulated environments have explicit restrictions on what orchestration tooling can be introduced. A financial institution that has completed a lengthy security assessment for its existing infrastructure is not casually adding a new Kubernetes distribution to its production environment. The compliance path for a net-new orchestration layer is long, expensive, and frequently not worth pursuing for a single FDE engagement.

Network topology incompatibility. The operator control plane needs to reach the customer namespace. If the customer operates in a segmented network — which is common in financial services, healthcare, and defense — the operator's assumption about network reachability may simply be wrong. Four-hour VPN drops are not an edge case; they're what an air-gapped-adjacent enterprise network looks like from the outside.

None of this is a criticism of the operator pattern. The pattern is sound. The problem is the assumption about where the pattern can be deployed. In AI lab FDE, that assumption is generally safe. In enterprise FDE, it fails frequently enough to be the rule rather than the exception.

The enterprise FDE architecture that would actually work starts from different foundations: platform-agnostic runtime adapters (Ansible, ARM templates, PowerShell, SSH scripts — whatever the environment actually supports), a pattern registry that is decoupled from any specific deployment runtime, and organizational incentives that make contributing knowledge to the registry feel like building career capital rather than fulfilling a compliance obligation.

What that architecture looks like in code is sketched in the source material [ARCHITECTURE.md]:

```python
# Platform-agnostic pattern: pulled from a registry, runs anywhere
from fde_patterns import Pattern, Context

# FDE discovers a validated pattern from previous engagements
ldap_bridge = Pattern.load("ldap-to-entra-nested-groups", version="2.1.0")

# Parameterized for this specific customer context
ctx = Context(
    source_ldap_host=customer.ldap_server,
    target_tenant=customer.azure_tenant_id,
    compliance=["sox", "gdpr"],
    group_depth_limit=8,  # customer-specific constraint discovered on-site
)

# Deploys however the environment allows: API, script, Ansible, whatever
ldap_bridge.deploy(ctx, runtime="ansible")
```

Not YAML. Not CRDs. Code, because FDEs write code. A language library that encapsulates proven patterns, accepts customer-specific parameters, and deploys via whatever mechanism the customer environment actually supports. The runtime is an adapter, not the foundation.

This architecture largely doesn't exist yet for enterprise. It needs to be built. And the K8s operator isn't the blueprint — it's a useful starting point for a specific subset of the problem.

The Deterministic/Probabilistic AI Border

As AI tooling enters FDE workflows — LLM-generated integration code, AI-assisted pattern matching, automated abstraction proposals — there's an architectural boundary that can't be left implicit.

Probabilistic AI outputs (LLM-generated code, recommendations, pattern summaries) need deterministic guardrails before they affect production customer systems. This is not safety theater for the compliance team. It's the thing that makes enterprise procurement actually sign off on AI-assisted FDE tooling.

The specific mechanisms are:

Schema validation. AI-generated integration configurations are validated against a strict schema before deployment. The LLM proposes a pattern; the pattern must conform to a typed schema with known-valid parameter ranges before it's executed. This is the boundary between "the AI suggested something" and "the system acted on it."
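As a rough illustration of that boundary, the sketch below checks a proposed parameter set against a typed schema with known-valid ranges. The schema contents, the `validate` function, and the specific limits are assumptions made for the example, not values from any real deployment.

```python
# Hypothetical schema: parameter name -> expected type and allowed values
SCHEMA = {
    "group_depth_limit": {"type": int, "min": 1, "max": 16},
    "runtime": {"type": str, "allowed": {"ansible", "powershell", "ssh"}},
}


def validate(proposal: dict, schema: dict) -> list:
    """Return a list of problems; an empty list means the proposal may proceed."""
    errors = [f"unknown parameter: {k}" for k in proposal if k not in schema]
    for name, rule in schema.items():
        if name not in proposal:
            errors.append(f"missing parameter: {name}")
            continue
        value = proposal[name]
        # Exact type match, so True is not accepted where an int is expected
        if type(value) is not rule["type"]:
            errors.append(f"{name}: expected {rule['type'].__name__}")
            continue
        if "min" in rule and value < rule["min"]:
            errors.append(f"{name}: below minimum {rule['min']}")
        if "max" in rule and value > rule["max"]:
            errors.append(f"{name}: above maximum {rule['max']}")
        if "allowed" in rule and value not in rule["allowed"]:
            errors.append(f"{name}: {value!r} is not an allowed value")
    return errors
```

Returning the full list of problems, rather than failing on the first one, gives the reviewing FDE a complete picture of what the model got wrong in a single pass.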

Approval gates. Any AI-proposed pattern change — a new abstraction, a modification to an existing primitive — requires human FDE review before it enters the shared library. The Full Loop's step 4 is not a rubber stamp; it's the mechanism that keeps AI-generated patterns from degrading the library's integrity. The gate is structural, not cultural.

Circuit breakers. Telemetry-driven rollback if a deployed AI-assisted integration exceeds error thresholds within a defined window (24 hours is a reasonable starting point; the exact threshold is environment-dependent). If an integration built using an AI-proposed pattern starts failing at a rate that exceeds normal tolerance, the circuit breaks and the deployment rolls back automatically.
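A circuit breaker of this kind can be sketched as a sliding-window error-rate check. This is a minimal version under stated assumptions: the class name, the `on_trip` callback standing in for the rollback, and the `min_samples` guard against tripping on too little data are all illustrative choices, and timestamps are passed in explicitly so the logic stays testable.

```python
from collections import deque
from typing import Callable


class CircuitBreaker:
    def __init__(self, max_error_rate: float, window_seconds: float,
                 min_samples: int, on_trip: Callable[[], None]) -> None:
        self.max_error_rate = max_error_rate
        self.window_seconds = window_seconds
        self.min_samples = min_samples
        self.on_trip = on_trip
        self.tripped = False
        self._events = deque()  # (timestamp, succeeded) pairs inside the window

    def record(self, succeeded: bool, now: float) -> bool:
        """Record one call outcome; return True once the breaker has tripped."""
        if self.tripped:
            return True
        self._events.append((now, succeeded))
        # Drop outcomes that have aged out of the observation window
        cutoff = now - self.window_seconds
        while self._events and self._events[0][0] < cutoff:
            self._events.popleft()
        total = len(self._events)
        failures = sum(1 for _, ok in self._events if not ok)
        if total >= self.min_samples and failures / total > self.max_error_rate:
            self.tripped = True
            self.on_trip()  # in a real system this would start the rollback
        return self.tripped
```

With `window_seconds=24 * 3600` this matches the 24-hour starting point mentioned above; the error-rate threshold and minimum sample count would need tuning per environment.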

These three mechanisms map to the three ways AI-assisted FDE can go wrong: the AI generates a structurally invalid pattern (schema validation catches it), the AI generates a structurally valid but conceptually wrong pattern (approval gate catches it), and the AI generates a pattern that looks right but fails in the specific customer environment (circuit breaker catches it).

If an enterprise FDE organization isn't prepared to specify and implement these mechanisms concretely, the right answer is not to skip the AI-assistance layer — it's to not introduce AI-generated patterns into production customer systems until the guardrails are in place. The risk isn't theoretical. Enterprise customers with compliance obligations will ask, specifically, what controls exist on AI-generated integration code. The answer needs to be concrete.

What the Architecture Would Actually Need

To summarize what an architecture for enterprise FDE — the enterprise FDE that doesn't yet exist as a discipline — would actually require:

Platform-agnostic pattern registry. The pattern library cannot be tied to a specific deployment runtime. Patterns need to be parameterized templates that can be instantiated via Ansible, ARM, PowerShell, SSH scripts, or K8s CRDs, depending on what the customer environment supports. The registry is the stable layer; the runtime is the adapter.

Runtime adapters, not a prescribed runtime. The IBM engineer doesn't have a K8s cluster. The Microsoft FastTrack engineer does have Azure APIs. The Red Hat engineer has Ansible. The architecture needs to meet each of them where they are. That means adapter interfaces for each major runtime, not a single prescribed deployment mechanism.
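The adapter idea can be sketched as a small interface with one implementation per runtime. Everything here is illustrative: the `RuntimeAdapter` protocol, the `plan_deploy` function, and the playbook and script file names are assumptions, and the adapters only render the command they would run rather than executing it. Parameter values are assumed to contain no spaces.

```python
from typing import Dict, List, Protocol


class RuntimeAdapter(Protocol):
    def render(self, pattern: str, params: dict) -> List[str]:
        """Return the command that would apply the pattern in this runtime."""
        ...


class AnsibleAdapter:
    def render(self, pattern: str, params: dict) -> List[str]:
        # ansible-playbook accepts key=value pairs through --extra-vars
        extra_vars = " ".join(f"{k}={v}" for k, v in sorted(params.items()))
        return ["ansible-playbook", f"{pattern}.yml", "--extra-vars", extra_vars]


class PowerShellAdapter:
    def render(self, pattern: str, params: dict) -> List[str]:
        # Script parameters are passed as -Name value pairs after -File
        args = []
        for key, value in sorted(params.items()):
            args += [f"-{key}", str(value)]
        return ["pwsh", "-File", f"{pattern}.ps1", *args]


# The registry of adapters is separate from the patterns themselves
ADAPTERS: Dict[str, RuntimeAdapter] = {
    "ansible": AnsibleAdapter(),
    "powershell": PowerShellAdapter(),
}


def plan_deploy(pattern: str, params: dict, runtime: str) -> List[str]:
    adapter = ADAPTERS.get(runtime)
    if adapter is None:
        raise ValueError(f"no adapter registered for runtime {runtime!r}")
    return adapter.render(pattern, params)
```

Adding support for another environment means registering one more adapter; the pattern name and its parameters stay the same across all of them.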

Knowledge capture that feels like career capital. The Full Loop only works if FDEs actually contribute to it. Mandatory documentation that feels like administrative overhead will be gamed and ignored. The mechanism for pattern contribution needs to be integrated into the workflow itself — automatic signal capture, LLM-assisted abstraction proposals, lightweight human review — so that contributing to the library is the path of least resistance, not an additional burden.

Organizational model for the review layer. Someone has to maintain pattern quality. The governance model — who reviews, who approves, how patterns get deprecated, what maturity levels mean — needs to be defined before the pattern library exists, not after it's filled with untrusted patterns that nobody uses.

None of this exists in a packaged form for enterprise today. There are internal wikis. There are internal libraries at large vendors. There are knowledge management systems that FDEs technically have access to and practically don't use. There is not a platform designed from the ground up to make the Full Loop work in environments where K8s operators can't be deployed.

That's the honest state of the infrastructure gap.

What This Means for the Operator

Post 5 of this series describes a Kubernetes operator for Forward Deployed Engineering. Before you read it, the honest framing is this:

The operator is not the architecture enterprise FDE needs today. The IBM engineer in Stuttgart, the Microsoft FastTrack engineer mapping Active Directory, the Red Hat engineer spending six weeks with Gary — none of them are served by a K8s operator today. The environments they work in can't run one.

The operator is a beachhead: proof of the Full Loop concept in environments where it can work right now. Cloud-native FDE organizations (AI labs, defense tech companies, and modern enterprises that have already adopted Kubernetes as standard infrastructure) can use the operator to implement the Full Loop today. Every concept it proves, from the CRD schema design and the pattern extraction logic to the governance model and the telemetry feedback path, is a concept that a more general enterprise FDE platform will eventually need to generalize beyond Kubernetes.

The operator being described in the next post is explicitly scoped to cloud-native FDE — and understanding why that scoping matters is the point.

In the previous post, we established that enterprise FDE has a structural cost problem that infrastructure has to solve. This post names the architectural constraint: the tool can't assume the environment. The next post describes an operator that works within that constraint — for the environments where it can — and explains what building it teaches us about the enterprise architecture that comes next.